PCCOE

Department of Computer Engineering

2025–26

The Evolution of AI

Discover the transformative journey from rigid rule-based systems to sophisticated generative models that understand, learn, and create.

● Presented by PCCOE Computer Department

The AI Evolution Timeline

From 1943 to 2025: Transformative breakthroughs that shaped modern AI

Artificial Neurons

McCulloch-Pitts neuron model introduced

Rule-Based Systems

Expert systems with explicit rules for AI applications

Backpropagation

Backpropagation algorithm revolutionized neural network training

Deep Learning

Deep neural networks transformed pattern recognition and machine learning

GANs Introduced

Generative Adversarial Networks enabled synthetic data creation

Transformers

Attention mechanisms revolutionized NLP and language models

Diffusion Models

DALL-E 2, Midjourney revolutionized AI image generation with diffusion-based models

Advanced AGI Era

Multimodal AI systems with stronger reasoning and real-time processing, working toward human-level problem solving

Each milestone represents a breakthrough that shaped modern artificial intelligence.

Rule-Based Systems & Expert Systems

The genesis of practical artificial intelligence lay in rule-based systems, also known as expert systems, which dominated AI research from the 1960s through the 1980s. These pioneering systems encoded human expertise into explicit "if-then" rules that computers could execute deterministically.

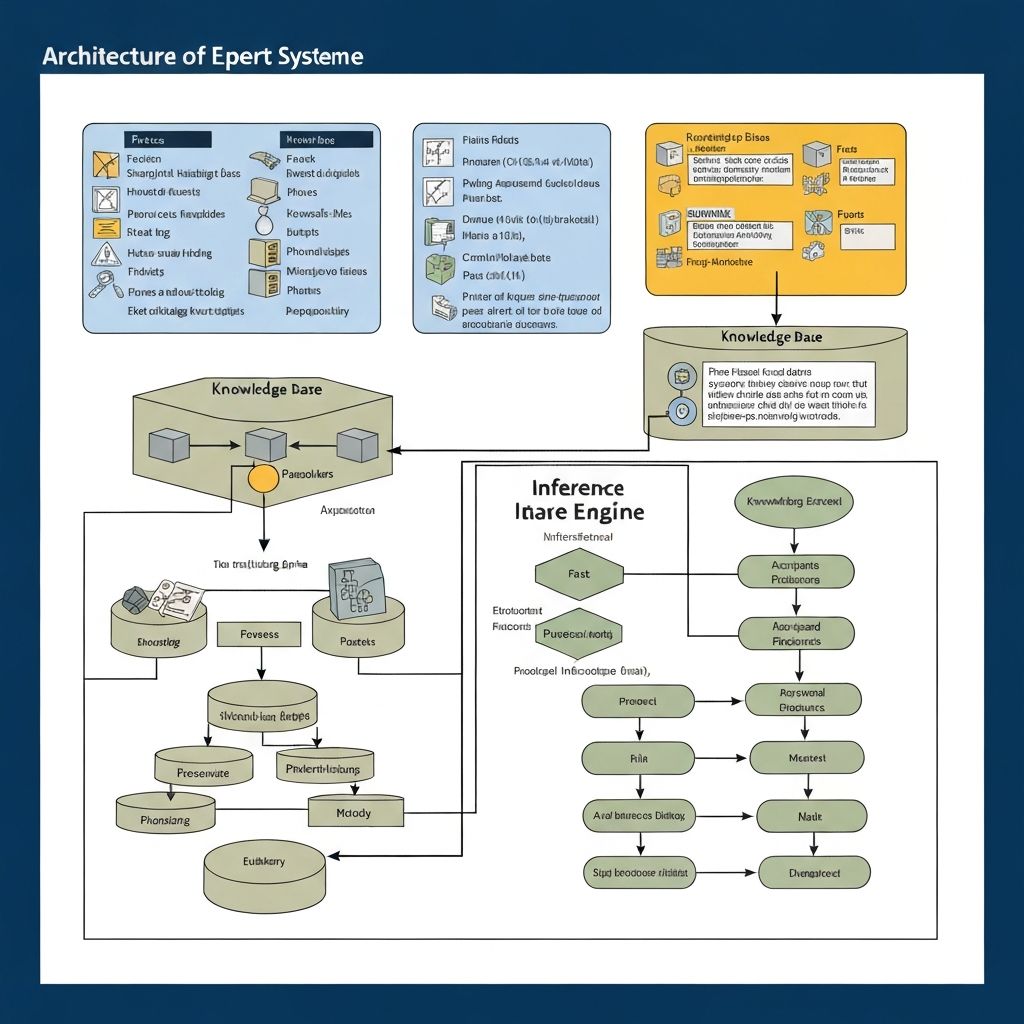

Architecture of Rule-Based Systems

Knowledge Base

Stores domain-specific expert rules and facts that govern system behavior.

Inference Engine

Applies rules to infer conclusions from input data systematically using logical deduction.

Working Memory

Holds dynamic facts being processed during system execution and reasoning.

User Interface

Interacts with humans for input and output of expert system results.

Architecture of Expert Systems

Core components showing knowledge representation, inference logic, and system interaction
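
The four components above can be sketched in a few lines of Python. This is a minimal illustration only: the rules and fact names are hypothetical toy examples, not taken from MYCIN or any real expert system, and the inference engine uses simple forward chaining.

```python
from typing import FrozenSet, List, Set, Tuple

# A rule is (premises, conclusion): if every premise is a known fact,
# the conclusion is added as a new fact.
Rule = Tuple[FrozenSet[str], str]

# Knowledge base: hypothetical toy rules for illustration only.
KNOWLEDGE_BASE: List[Rule] = [
    (frozenset({"fever", "cough"}), "respiratory_infection"),
    (frozenset({"respiratory_infection", "chest_pain"}), "suspect_pneumonia"),
]


def infer(initial_facts: Set[str], rules: List[Rule]) -> Set[str]:
    """Inference engine: forward-chain until no rule adds a new fact."""
    working_memory = set(initial_facts)  # dynamic facts for this run
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            if premises <= working_memory and conclusion not in working_memory:
                working_memory.add(conclusion)
                changed = True
    return working_memory


# Stand-in for the user interface: facts in, derived conclusions out.
observed = {"fever", "cough", "chest_pain"}
derived = infer(observed, KNOWLEDGE_BASE)
print(sorted(derived - observed))
# prints ['respiratory_infection', 'suspect_pneumonia']
```

Facts that match no rule simply produce no conclusion, which is the rigidity discussed under the limitations of these systems.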

Pioneering Examples & Milestones

1950s–60s

General Problem Solver (GPS)

Developed by Newell, Shaw, and Simon, GPS tackled problems by breaking them into subgoals through means-ends analysis.

1965

DENDRAL

Pioneering system that inferred molecular structures from mass-spectrometry data, automating part of chemical analysis.

1970s

MYCIN

Medical expert system with ~600 rules for diagnosing bacterial infections; in evaluations it performed on par with specialist physicians.

✓ Strengths

- Explainable reasoning - Users understand why decisions were made

- Predictable behavior - Rule-driven logic ensures consistency

- High accuracy - Performs exceptionally in narrow, well-defined domains

✗ Limitations

- Rigid structure - Fails completely outside defined rules

- Poor scalability - Exponentially harder as rule complexity grows

- No learning - Manual updates required for every new scenario

Why This Led to Machine Learning

The brittleness of rule-based systems became apparent by the 1980s. Complex domains required ever-larger rule sets that were costly to write and maintain, and any situation outside the rule set left the system with no answer. The community realized that instead of manually encoding every rule, systems needed to learn patterns from data. This fundamental shift drove the move toward machine learning and neural networks in the late 1980s and 1990s.

The Shift to Machine Learning

The late 1980s and 1990s brought a paradigm shift from rigid rules to data-driven learning. Neural networks, whose foundations were laid in the mid-20th century, resurged once backpropagation was popularized in 1986, enabling systems to learn patterns directly from data.

Neural Networks: The Building Blocks Timeline

1943

McCulloch-Pitts Model

Introduces the mathematical model of artificial neurons, foundational to all neural networks

1958

Rosenblatt's Perceptron

First neural network demonstrating pattern recognition, sparked significant interest in AI

1969

Neural Network Research Stalls

Minsky & Papert's book 'Perceptrons' highlights the limits of single-layer perceptrons; funding for neural network research dries up for more than a decade

1986

Backpropagation Revival

Rumelhart, Hinton & Williams popularize backpropagation, making it practical to train multilayer networks

1989

LeNet & Convolutional Networks

Yann LeCun applies CNNs to handwriting recognition—first practical deep learning success
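
The 1943 and 1958 entries in this timeline can be made concrete with a small sketch: a threshold neuron in the McCulloch-Pitts style, trained with the perceptron learning rule on the logical AND function. The function names, learning rate, and epoch count are illustrative assumptions, not a reconstruction of Rosenblatt's original hardware.

```python
from typing import List, Sequence, Tuple

Example = Tuple[Sequence[float], int]


def step(activation: float) -> int:
    """Threshold unit: fire (1) when the weighted sum is non-negative."""
    return 1 if activation >= 0 else 0


def predict(weights: List[float], bias: float, inputs: Sequence[float]) -> int:
    return step(sum(w * x for w, x in zip(weights, inputs)) + bias)


def train_perceptron(data: List[Example], epochs: int = 20,
                     lr: float = 1.0) -> Tuple[List[float], float]:
    """Perceptron rule: nudge the weights toward every misclassified example."""
    weights = [0.0] * len(data[0][0])
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in data:
            error = target - predict(weights, bias, inputs)
            weights = [w + lr * error * x for w, x in zip(weights, inputs)]
            bias += lr * error
    return weights, bias


# Logical AND is linearly separable, so the rule converges.
AND_DATA: List[Example] = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train_perceptron(AND_DATA)
predictions = [predict(weights, bias, inputs) for inputs, _ in AND_DATA]
print(predictions)  # prints [0, 0, 0, 1]
```

A single unit like this cannot represent XOR, which is not linearly separable. That limitation is the one the 1969 entry refers to, and multilayer networks trained with backpropagation later addressed it.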

Machine Learning Paradigms

Supervised Learning

Trains on labeled input-output pairs to learn mapping functions.

Unsupervised Learning

Finds patterns in unlabeled data, discovering hidden structures.

Reinforcement Learning

Learns by trial and error, optimizing for cumulative rewards.

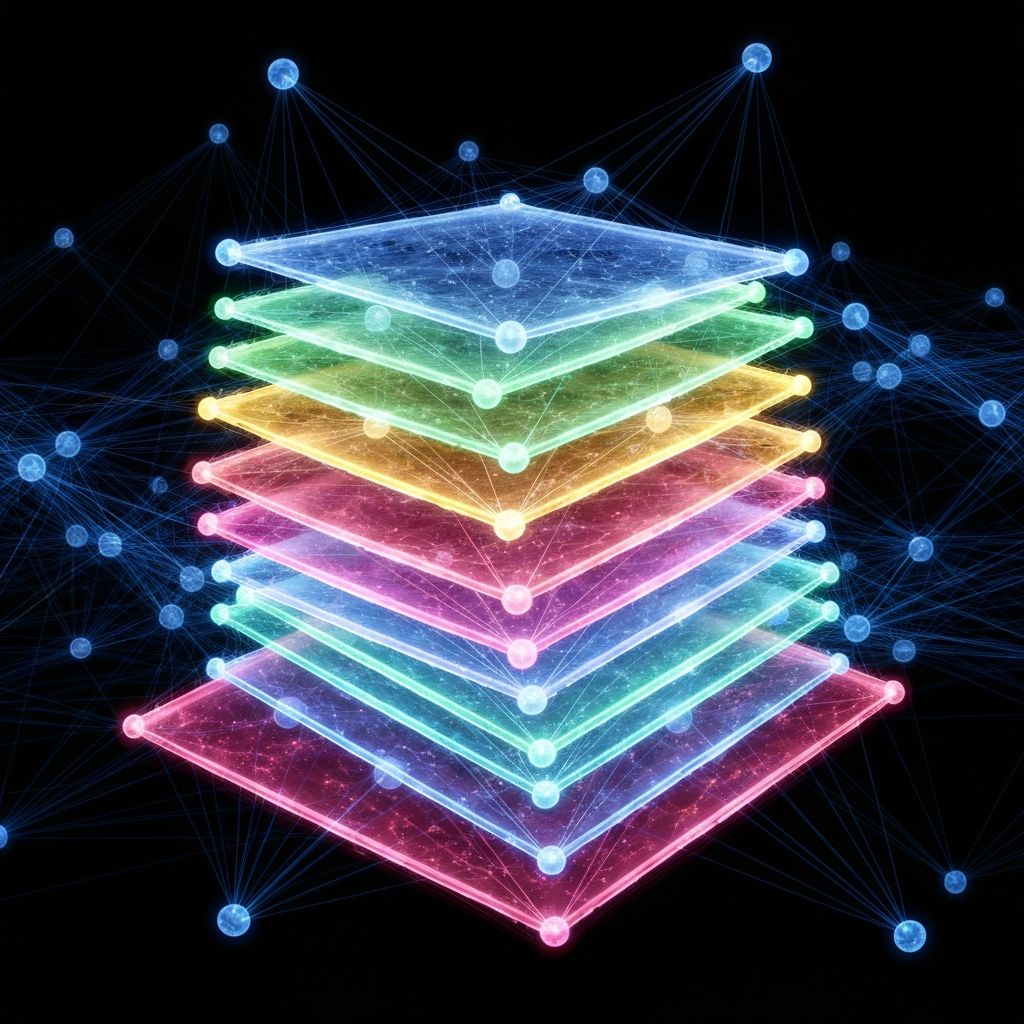

Neural Network Architecture

Interconnected nodes and layers enable learning from data patterns through backpropagation
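
As a rough sketch of how backpropagation works, the example below uses the smallest possible "network": one hidden tanh unit followed by one linear output unit. The weights, input, target, and learning rate are arbitrary values chosen for illustration; real networks apply the same chain rule across many units and layers.

```python
import math
from typing import Tuple


def forward(w1: float, w2: float, x: float) -> Tuple[float, float]:
    """Forward pass: input -> hidden tanh unit -> linear output."""
    hidden = math.tanh(w1 * x)
    output = w2 * hidden
    return hidden, output


def loss(w1: float, w2: float, x: float, target: float) -> float:
    """Squared-error loss for a single example."""
    _, output = forward(w1, w2, x)
    return 0.5 * (output - target) ** 2


def backward(w1: float, w2: float, x: float, target: float) -> Tuple[float, float]:
    """Backward pass: apply the chain rule from the loss back to each weight."""
    hidden, output = forward(w1, w2, x)
    d_output = output - target                     # dL/d(output)
    grad_w2 = d_output * hidden                    # dL/dw2
    d_hidden = d_output * w2                       # dL/d(hidden)
    grad_w1 = d_hidden * (1.0 - hidden ** 2) * x   # tanh'(z) = 1 - tanh(z)^2
    return grad_w1, grad_w2


def train(w1: float, w2: float, x: float, target: float,
          lr: float = 0.1, steps: int = 200) -> Tuple[float, float]:
    """Plain gradient descent on one example."""
    for _ in range(steps):
        grad_w1, grad_w2 = backward(w1, w2, x, target)
        w1 -= lr * grad_w1
        w2 -= lr * grad_w2
    return w1, w2


before = loss(0.5, -0.3, 1.0, 0.5)
trained_w1, trained_w2 = train(0.5, -0.3, 1.0, 0.5)
after = loss(trained_w1, trained_w2, 1.0, 0.5)
print(after < before)  # the loss shrinks as the weights follow the gradient
```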

Why This Led to Deep Learning

By the 2000s, computing power and dataset sizes had both grown dramatically. Researchers found that deeper networks with more layers could learn increasingly abstract patterns, but GPUs were not yet widely used for training, which kept deep networks impractical. Once general-purpose GPU programming became practical in the late 2000s, deep learning took off.

The Deep Learning Revolution

The mid-2000s introduced deep learning, revolutionizing tasks like image and speech recognition through GPU acceleration and breakthrough architectures.

GPU Acceleration

Graphics processors drastically reduced training from months to weeks

100x faster

DanNet (2011)

Achieved superhuman performance in a traffic-sign recognition competition

Superhuman accuracy

ImageNet 2012 - AlexNet

Won ImageNet challenge with unprecedented accuracy

84.7% top-5 accuracy (15.3% top-5 error)

Convolutional Neural Networks

Hierarchical feature extraction from raw pixels to abstract patterns enables superhuman image recognition

2012 AlexNet Revolution

Key Developments in Deep Learning

GPU Acceleration

Dramatically accelerated training, enabling models to learn from massive datasets in weeks

Deep Architectures

Enabled capture of complex hierarchical features from pixels to abstract concepts

Transfer Learning

Pre-trained models solve new problems faster with dramatically less data needed

Convolutional Layers

Became standard for vision tasks with pooling for efficiency and dimensionality reduction
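
To make the convolution and pooling steps concrete, here is a dependency-free sketch. The 5x5 image and the 2x2 vertical-edge kernel are made-up illustrative values; in a trained CNN the kernel weights are learned, and libraries use optimized tensor operations rather than Python loops.

```python
from typing import List

Matrix = List[List[float]]


def conv2d(image: Matrix, kernel: Matrix) -> Matrix:
    """'Valid' cross-correlation, the operation CNN layers call convolution."""
    k_h, k_w = len(kernel), len(kernel[0])
    out_h = len(image) - k_h + 1
    out_w = len(image[0]) - k_w + 1
    return [
        [
            sum(image[i + di][j + dj] * kernel[di][dj]
                for di in range(k_h) for dj in range(k_w))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


def max_pool_2x2(feature_map: Matrix) -> Matrix:
    """Keep the strongest response in each non-overlapping 2x2 window."""
    return [
        [
            max(feature_map[i][j], feature_map[i][j + 1],
                feature_map[i + 1][j], feature_map[i + 1][j + 1])
            for j in range(0, len(feature_map[0]) - 1, 2)
        ]
        for i in range(0, len(feature_map) - 1, 2)
    ]


# Dark left side, bright right side: a vertical edge between columns 1 and 2.
image = [[0, 0, 1, 1, 1] for _ in range(5)]
# Hypothetical hand-written kernel that responds to dark-to-bright transitions.
vertical_edge = [[-1, 1], [-1, 1]]

feature_map = conv2d(image, vertical_edge)   # 4x4 map, peaks at the edge
pooled = max_pool_2x2(feature_map)           # 2x2 map after downsampling
print(pooled)  # prints [[2, 0], [2, 0]]
```

Pooling halves each spatial dimension while preserving where the edge response was strongest, which is the dimensionality reduction mentioned above.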

The Age of Generative Models

Models that could generate diverse, realistic outputs fundamentally transformed creative and analytical AI capabilities.

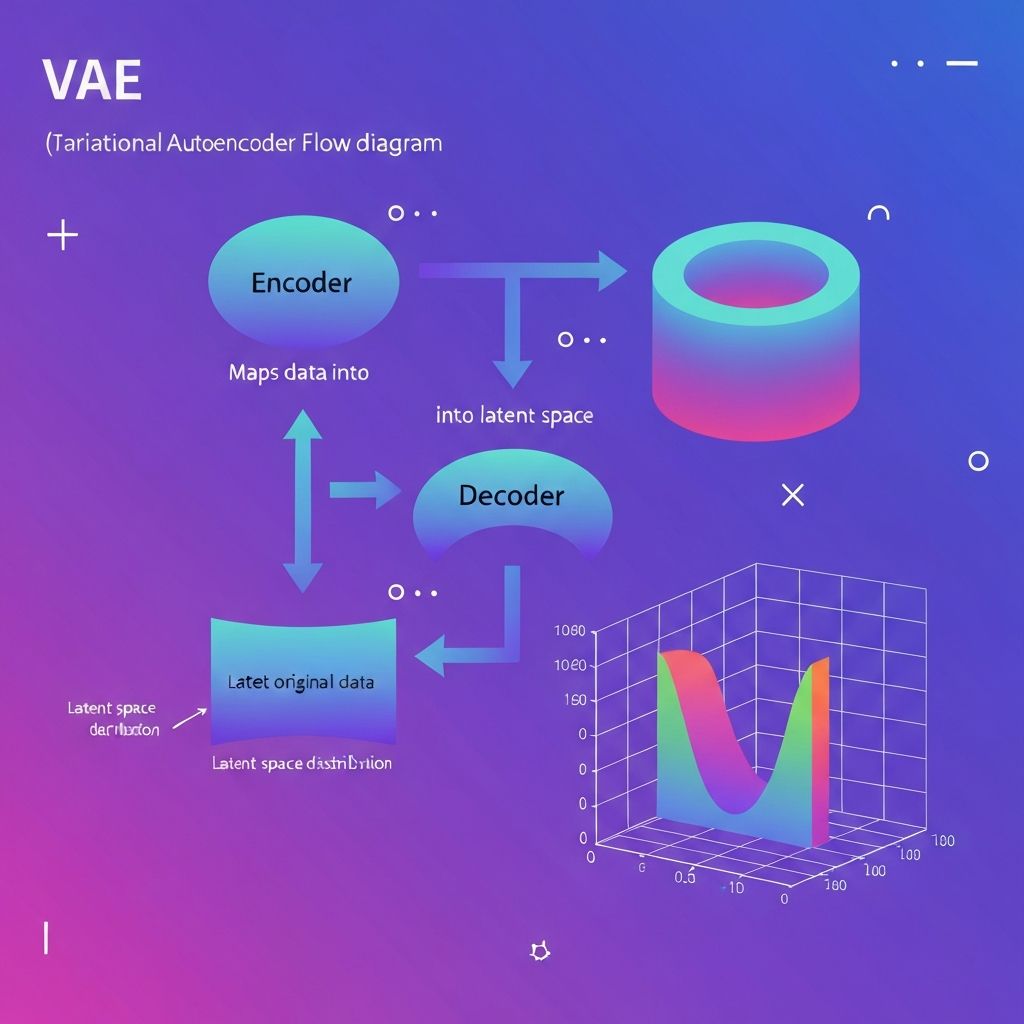

Variational Autoencoders (VAEs)

VAEs introduced probabilistic latent spaces, enabling generation of diverse outputs from learned distributions. Became crucial for image generation, anomaly detection, and drug discovery.

Probabilistic Generation Flow

Encodes to distribution, samples for diversity, decodes to generate novel outputs
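
The "encode to a distribution, then sample" step is commonly implemented with the reparameterization trick, sketched below. The mean and log-variance values are hypothetical stand-ins for what an encoder network would output, and no encoder or decoder network is included.

```python
import math
import random
from typing import List


def sample_latent(mean: List[float], log_var: List[float],
                  rng: random.Random) -> List[float]:
    """Reparameterization trick: z = mean + sigma * eps, with eps ~ N(0, 1)."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0)
            for m, lv in zip(mean, log_var)]


def kl_to_standard_normal(mean: List[float], log_var: List[float]) -> float:
    """KL divergence from N(mean, sigma^2) to N(0, 1), summed over dimensions."""
    return -0.5 * sum(1.0 + lv - m * m - math.exp(lv)
                      for m, lv in zip(mean, log_var))


rng = random.Random(0)
# Hypothetical encoder output for one input: a 2-dimensional latent distribution.
mean, log_var = [0.5, -1.0], [0.0, -2.0]

# Sampling several times from the same distribution yields different latent
# codes, which a decoder would turn into diverse outputs.
samples = [sample_latent(mean, log_var, rng) for _ in range(3)]
print(samples)
print(kl_to_standard_normal(mean, log_var))
```

The KL term is the regularizer that keeps the learned latent distributions close to a standard normal, so that sampling from the prior at generation time produces plausible codes.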

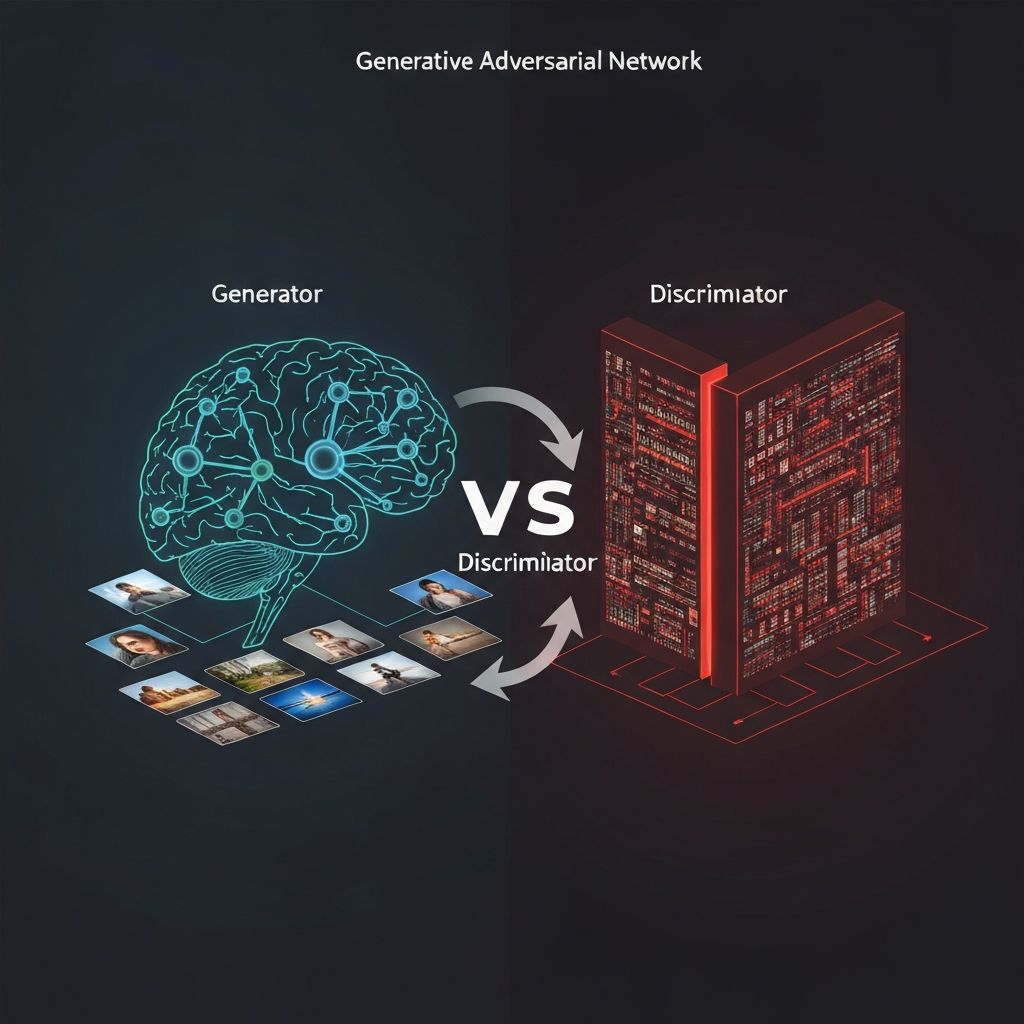

Generative Adversarial Network

Competitive learning between generator and discriminator produces photorealistic images

Generative Adversarial Networks (GANs)

Introduced in 2014 by Ian Goodfellow, GANs transformed generative AI through competitive dynamics between generator and discriminator, enabling highly realistic image synthesis.

Generator

Creates synthetic images attempting to fool the discriminator

Discriminator

Distinguishes real images from generated ones
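
The competition between the two networks can be expressed through their loss functions. The sketch below assumes the discriminator outputs a probability that an image is real and uses the non-saturating generator loss suggested in the original GAN paper; the probability values are made up for illustration, and no actual networks are trained.

```python
import math
from typing import List


def discriminator_loss(d_real: List[float], d_fake: List[float]) -> float:
    """Binary cross-entropy: real images should score near 1, fakes near 0."""
    real_term = -sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = -sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term


def generator_loss(d_fake: List[float]) -> float:
    """Non-saturating loss: low when the discriminator rates fakes as real."""
    return -sum(math.log(p) for p in d_fake) / len(d_fake)


# Hypothetical discriminator outputs (probability that an image is real).
confident_d = discriminator_loss(d_real=[0.9, 0.8], d_fake=[0.1, 0.2])
fooled_d = discriminator_loss(d_real=[0.5, 0.5], d_fake=[0.5, 0.5])
print(confident_d < fooled_d)  # a discriminator that separates well has lower loss

weak_g = generator_loss([0.1, 0.2])    # fakes are easily spotted
strong_g = generator_loss([0.8, 0.9])  # fakes mostly fool the discriminator
print(strong_g < weak_g)
```

Training alternates between lowering the discriminator loss and lowering the generator loss, so an improvement on one side raises the bar for the other.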

Generative Model Comparison

🔹VAEs

⚔️GANs

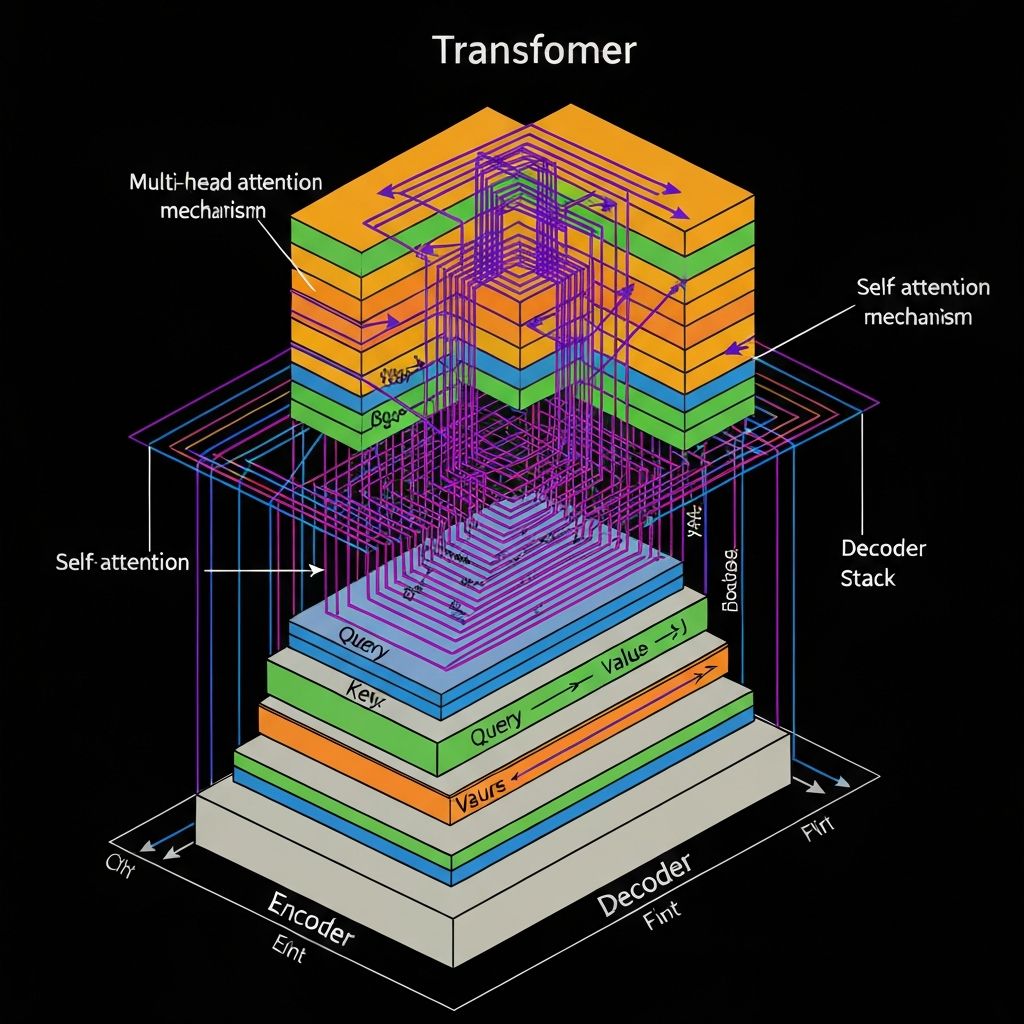

Transformer Architecture Breakthrough

Transformers revolutionized AI by replacing recurrent architectures with attention mechanisms, enabling parallel processing of sequences and capturing long-range dependencies with unprecedented efficiency.

Transformer Architecture

Self-attention enables parallel processing and long-range dependency capture

Why Transformers Changed Everything

Attention Mechanism

Allows models to focus on relevant parts, understanding context and relationships better

Parallel Processing

Processes entire sequences simultaneously, dramatically increasing speed

Long-Range Dependencies

Captures relationships between distant tokens essential for language understanding

Transfer Learning

Pre-trained models fine-tune for diverse tasks with minimal additional data
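
The attention mechanism described above is usually formulated as scaled dot-product attention. The following is a minimal pure-Python sketch with made-up query, key, and value vectors; production implementations use batched matrix operations, multiple heads, and learned projections.

```python
import math
from typing import List

Vector = List[float]


def softmax(scores: Vector) -> Vector:
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries: List[Vector], keys: List[Vector],
              values: List[Vector]) -> List[Vector]:
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V."""
    d_k = len(keys[0])
    outputs = []
    for query in queries:
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d_k)
                  for key in keys]
        weights = softmax(scores)
        outputs.append([
            sum(w * value[j] for w, value in zip(weights, values))
            for j in range(len(values[0]))
        ])
    return outputs


# Hypothetical 2-dimensional vectors for a two-token sequence.
keys = [[10.0, 0.0], [0.0, 10.0]]
values = [[1.0, 0.0], [0.0, 1.0]]
queries = [[10.0, 0.0]]  # strongly aligned with the first key

print(attention(queries, keys, values))  # output is close to the first value
```

Each query is handled independently of the others, so all positions can be computed at once. Every query also scores every key regardless of distance, which is how long-range dependencies are captured.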

Language Models: BERT & GPT Series

BERT (2018)

Introduced bidirectional context understanding, revolutionizing comprehension tasks

- Bidirectional training

- Superior language understanding

- Pre-training framework

GPT Series (2018–2023)

Scaled parameter counts massively, yielding increasingly fluent text generation. GPT-4 added image input

- Large-scale generation

- Few-shot learning

- Multimodal reasoning (GPT-4)

Diffusion Models: The Latest Breakthrough

Emerging around 2015 and refined through the 2020s, diffusion models generate data by reversing a gradual noising process, overcoming GAN limitations with more stable training.
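
The gradual noising process can be sketched as follows. The linear schedule from 1e-4 to 0.02 over 1000 steps is an assumption borrowed from common DDPM-style setups, and the three-element "image" is a toy stand-in. The reverse (generative) direction requires a trained network to predict the noise and is not shown.

```python
import math
import random
from typing import List, Tuple


def linear_betas(steps: int, beta_start: float = 1e-4,
                 beta_end: float = 0.02) -> List[float]:
    """Per-step noise variances, increasing linearly (an assumed schedule)."""
    return [beta_start + (beta_end - beta_start) * t / (steps - 1)
            for t in range(steps)]


def cumulative_alphas(betas: List[float]) -> List[float]:
    """alpha_bar_t: running product of (1 - beta), the signal kept by step t."""
    alpha_bars, product = [], 1.0
    for beta in betas:
        product *= 1.0 - beta
        alpha_bars.append(product)
    return alpha_bars


def add_noise(x0: List[float], t: int, alpha_bars: List[float],
              rng: random.Random) -> Tuple[List[float], List[float]]:
    """Sample x_t = sqrt(alpha_bar_t) * x0 + sqrt(1 - alpha_bar_t) * eps."""
    signal_scale = math.sqrt(alpha_bars[t])
    noise_scale = math.sqrt(1.0 - alpha_bars[t])
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    x_t = [signal_scale * x + noise_scale * e for x, e in zip(x0, eps)]
    return x_t, eps


alpha_bars = cumulative_alphas(linear_betas(1000))
rng = random.Random(0)
x0 = [1.0, -1.0, 0.5]  # toy "image" with three pixel values

slightly_noisy, _ = add_noise(x0, 10, alpha_bars, rng)
almost_pure_noise, _ = add_noise(x0, 999, alpha_bars, rng)
print(alpha_bars[10], alpha_bars[999])  # signal fraction shrinks toward zero
```

During training, the model sees `x_t` and learns to predict `eps`. Generation then starts from pure noise and removes the predicted noise step by step.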

✓ Advantages Over GANs

- Stable training

- Realistic outputs

- Better convergence

- Easier to debug

🎨 Notable Models

- DALL-E 2

- Stable Diffusion

- Midjourney

- Modern generative AI foundation

Current Landscape & Future Outlook

AI has entered a transformative phase where generative systems achieve rapid global adoption, powering creative, analytical, and collaborative tasks at unprecedented scale.

The Current Landscape

Multimodal Intelligence

Models now process and understand text, images, audio, and video simultaneously.

Hybrid Techniques

Combining multiple approaches for more robust and capable systems.

Reasoning-Driven Behavior

Moving beyond pattern matching toward multi-step reasoning and planning.

Rapid Adoption

ChatGPT reached an estimated 100M users within about two months of launch, faster than any consumer application before it.

Persistent Challenges

High Compute Demands

Training modern models requires enormous computational resources, limiting accessibility and increasing environmental impact.

Bias & Fairness

Models can perpetuate societal biases present in training data, affecting fairness across demographics.

Interpretability Gaps

Understanding how and why models make decisions remains difficult—the persistent 'black box' problem.

Ethics & Safety

Concerns about AI misuse, misinformation, job displacement, and ensuring safe, beneficial AI deployment.

The Path Forward

Efficient Models

Creating capable models with lower computational costs, faster inference, and reduced environmental footprint.

Domain Specialization

Building tailored models optimized for specific industries—healthcare, finance, scientific research.

Human-AI Collaboration

Designing systems that augment rather than replace human capability, creating symbiotic partnerships.

Governance & Safety

Establishing frameworks for responsible AI deployment with transparency, accountability, and ethical guardrails.

The Evolution Continues

From rule-based logic to generative intelligence, AI has evolved from following instructions to learning and creating. This transformation represents more than technological progress—it marks a fundamental shift from automation to human augmentation.

As AI advances toward deeper understanding, enhanced reliability, and responsible deployment across industries, the most exciting chapter may still be ahead. The convergence of multiple technologies, improved efficiency, and ethical frameworks will define the next era of artificial intelligence.

"The future of AI lies not in creating machines that replace humanity, but in building tools that enhance human potential and solve humanity's greatest challenges."

An exploration of artificial intelligence evolution by the Department of Computer Engineering, PCCOE • 2025–26